This blog was updated on September 01, 2023.

What is big data? You've probably heard the term before, and you may be considering it for enterprise data management and strategic decision-making.

The global big data market is projected to generate over $103 billion in revenues by 2027, and its current market value is around $274 billion.

To explain why everyone is going on about it, Netflix saves 1 billion USD per year on customer retention thanks to big data.

But what is this term that everyone keeps throwing around, and why are most business minds obsessed with it?

We'll dive into big data management and break it down for you.

What is Big Data? Definition and Meaning

Before we jump into big data, let's start with just data.

Data is a group of facts. These can be in the form of words, numbers, observations, descriptions, or measurements.

It can be subdivided into qualitative and quantitative data. Qualitative data is descriptive.

Let's say, "A person's blood type is B+, they have blonde hair, and they drive a Mercedes." That is an example of qualitative data.

Whereas quantitative data is numerical. For example, "97% of a high school class is going to college" or "80 of the 100 shoes sold on Thursday were blue."
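To make the distinction concrete, here is a minimal Python sketch. The field names and the `share` helper are hypothetical, chosen only for illustration; the values come from the examples above.

```python
# Qualitative data: descriptive attributes (labels, not measurements).
person = {"blood_type": "B+", "hair_color": "blonde", "car": "Mercedes"}

# Quantitative data: numbers you can count or do arithmetic on.
shoes_sold_thursday = 100
blue_shoes_sold = 80


def share(part: int, whole: int) -> float:
    """Return part/whole as a percentage."""
    return 100 * part / whole


blue_share = share(blue_shoes_sold, shoes_sold_thursday)
print(f"{blue_share:.0f}% of the shoes sold on Thursday were blue")
```

You can calculate with the quantitative values, while the qualitative ones can only be grouped, counted, or compared as labels.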

There are many ways of collecting data, such as direct observation and surveys.

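A quick grammar note: strictly speaking, "data" is the plural of "datum," so "The data are accurate" is correct. However, data is also a group of facts. Hence, "The data is accurate" also works!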

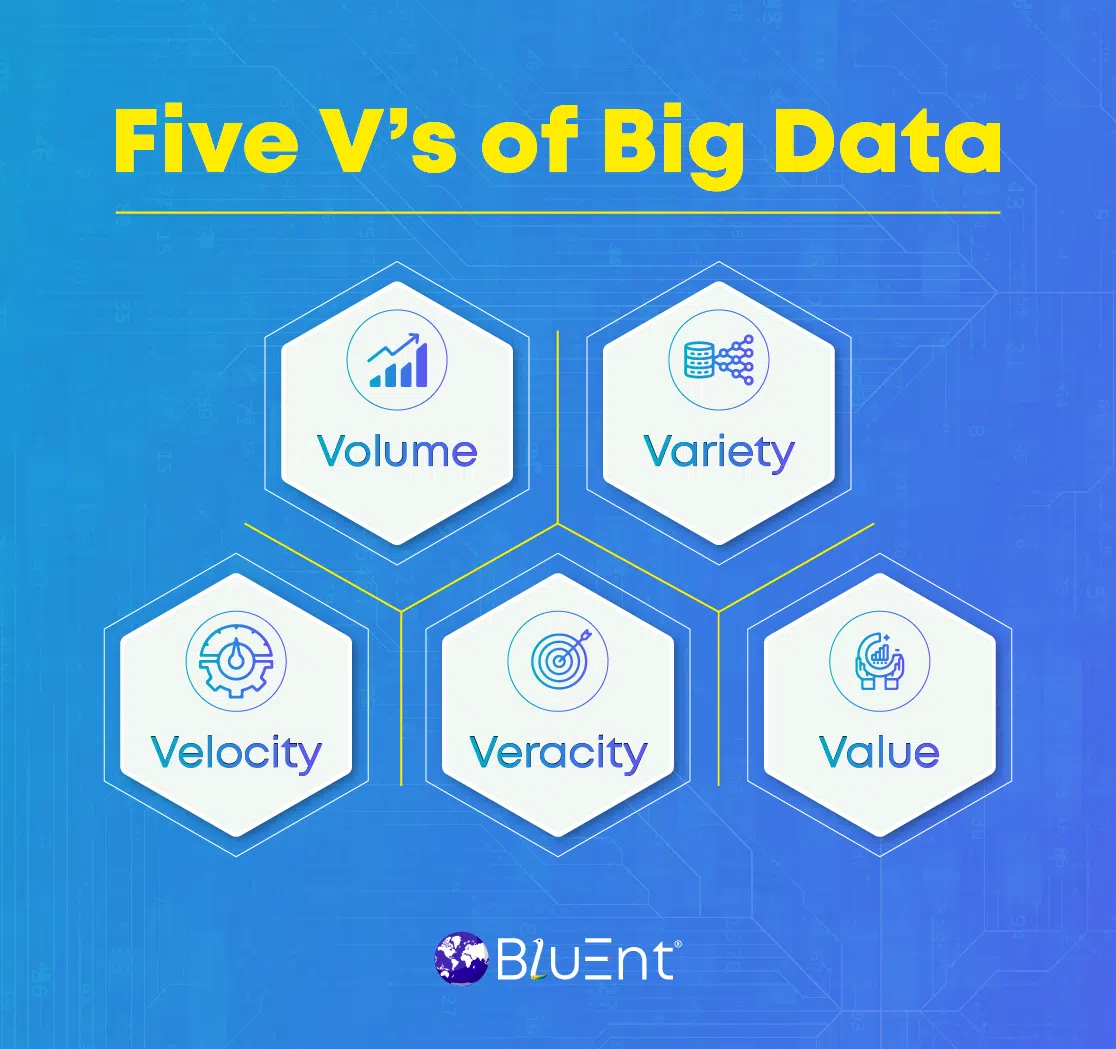

Big data can be considered a subdivision of data. It is so large that traditional data management methods and tools cannot handle it. It is defined by 5 V's: volume, veracity, variety, velocity, and value.

A former analyst at Gartner, Doug Laney, introduced the first three V's (volume, variety, and velocity) to define its characteristics. Veracity and value were added later.

Here's a quick brief on what these 5 V's mean:

Volume: This is the sheer amount of data involved. Big data is not confined to a fixed size; it typically runs to terabytes or more.

Variety: This includes structured and unstructured data for business decisions.

Velocity: This is the speed at which data is generated and processed, including real-time reports and insights that keep up with ever-changing business needs.

Veracity: This relates to the reliability and accuracy of data sets and insights generated.

Value: Companies get to learn the positive impact of data in terms of strategic decisions, customer retention, organizational efficiency, revenue growth, etc.

What is Big Data Technology Used For?

Data implementation opens doors to vast opportunities.

By 2027, data market revenues are projected to be twice the 2019 figure. That is consistent with a study showing that the global industry value surged from $169 billion in 2018 to $274 billion in 2022.

Data analytics and its types have many use cases in healthcare, governance, retail, supply chain, manufacturing, gaming, human resources, etc.

As an example, for enterprises, data companies can broadly help in the following five ways:

- Understanding customers
- Making better decisions
- Developing better products and services
- Improving operations
- Driving income

Data analytics in healthcare can be used to improve treatment and monitoring. Other uses include fraud detection and handling, advertising, and creating the right content for entertainment.

Standard data management and analysis techniques include predictive analytics, AI, machine learning, and data mining.

Some Important Big Data Examples

There are ample examples of data in the real world.

Every enterprise somehow deals with user-generated data from customers, employees, target audiences, company growth values, etc.

Look at the following examples of big data to see how some leading organizations are using it:

- A California-based company, Centerfield, uses customer data analytics to get insights into the preferences of existing clients. That enables the firm to refine its sales and marketing tactics to reach more potential clients.
- Netflix uses predictive analytics to forecast the failure rates of TV shows and programs to make the right investment decisions.
- A Chicago-based healthcare firm, Tempus, uses data-driven solutions to automate real-time clinical reporting, appointments, and multiple medical records. It also relies on predictive analytics for developing healthcare management systems.

Take advantage of data to make data-driven decisions and future-proof your enterprise from market uncertainties.

Critical Components of Big Data

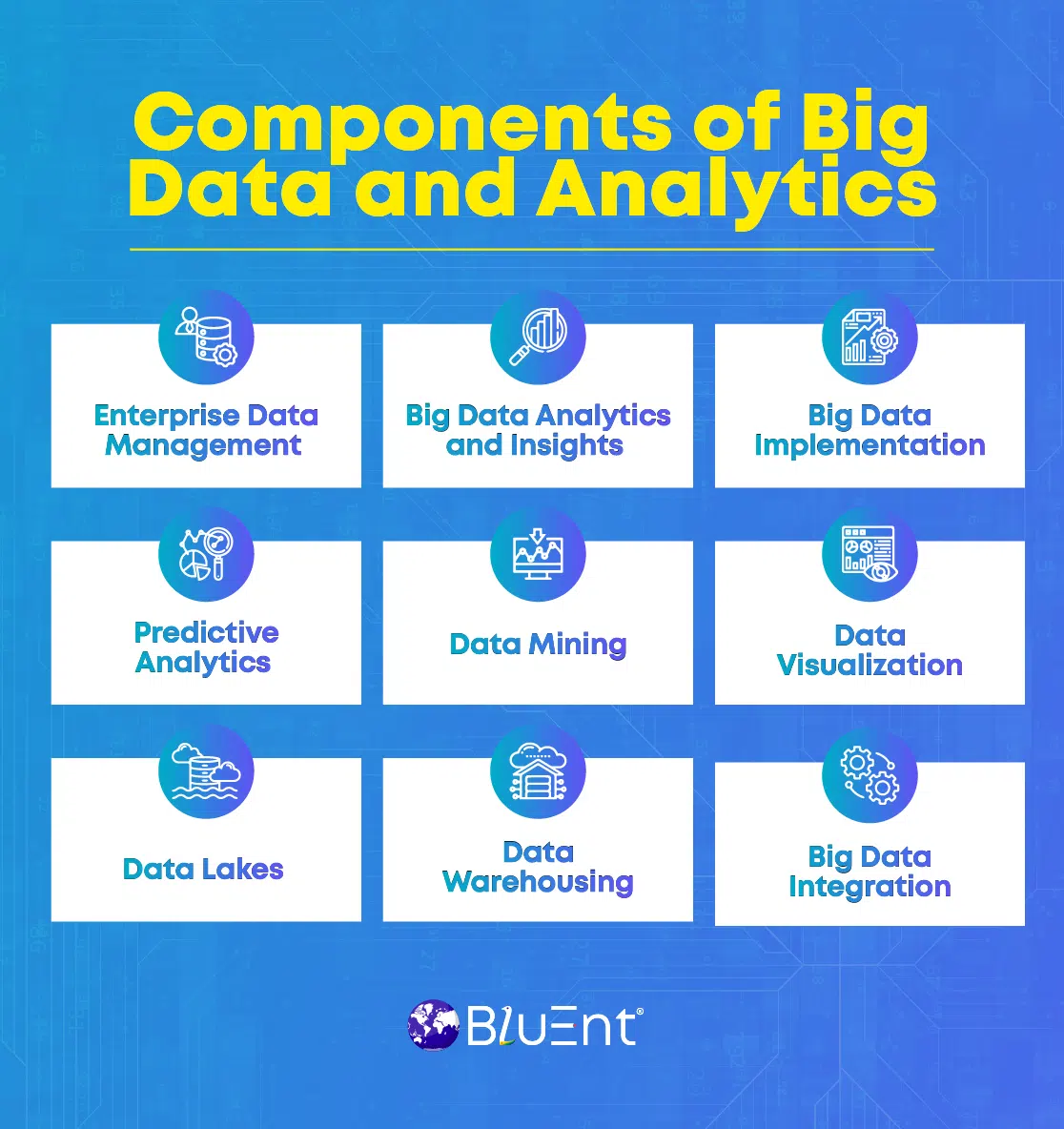

Let's discuss the core components of big data and services.

Enterprise Data Management

It's a set of practices to let businesses access, integrate, share, and control data seamlessly across multiple internal systems, applications, and software.

Data Analytics and Insights

Data analytics involves various methods and tools to collect information from different datasets and generate real-time reports for deep analysis and insights. Enterprises can further use them for decision-making and risk management.

Big Data Implementation

Enterprises opt for data implementation to deploy data-driven resources into their internal strategies and operations. That provides better ways to solve problems and make intelligent decisions.

Predictive Analytics

Predictive analytics uses historical data to forecast likely outcomes. In business, it helps users understand buyers' preferences and forecast demand, pricing changes, marketing budgets, and more.
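As a rough illustration of demand forecasting, the sketch below fits a straight line to past sales and extends it one month ahead. The sales figures and the `fit_line` helper are made up for this example; real projects usually rely on richer models and dedicated libraries.

```python
from statistics import mean

# Hypothetical monthly unit sales (illustrative numbers, not real data).
monthly_sales = [100, 110, 120, 130, 140]


def fit_line(ys: list[float]) -> tuple[float, float]:
    """Fit y = slope * x + intercept by ordinary least squares, with x = 0, 1, 2, ..."""
    xs = range(len(ys))
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return slope, intercept


slope, intercept = fit_line(monthly_sales)

# Extend the trend one step past the last observed month.
next_month = slope * len(monthly_sales) + intercept
print(f"Forecast for next month: {next_month:.0f} units")
```

A straight-line trend is the simplest possible predictive model. It is shown here only to make the idea tangible, not as a recommended forecasting method.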

Data Mining

It's also called Knowledge Discovery in Data (KDD). It is the process of identifying and discovering patterns in data.
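One classic pattern-discovery task is finding items that are often bought together. Here is a minimal sketch, assuming a handful of invented shopping baskets and a hypothetical `frequent_pairs` helper; production data mining works on far larger datasets with specialized algorithms.

```python
from collections import Counter
from itertools import combinations

# Hypothetical shopping baskets (illustrative data only).
baskets = [
    {"bread", "milk", "eggs"},
    {"bread", "milk"},
    {"milk", "eggs"},
    {"bread", "milk", "butter"},
]


def frequent_pairs(transactions, min_count):
    """Count how often each pair of items appears together and keep the common ones."""
    counts = Counter()
    for items in transactions:
        # Sort so that each pair is always counted under the same key.
        counts.update(combinations(sorted(items), 2))
    return {pair: n for pair, n in counts.items() if n >= min_count}


print(frequent_pairs(baskets, min_count=3))
```

With these baskets, bread and milk turn out to be the only pair bought together at least three times, which is the kind of pattern a retailer might act on.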

Data Visualization

Data visualization uses graphics such as charts to portray data, which makes the data easier to analyze and understand.

Data Lakes

As the name suggests, a data lake is a central pool or repository where you can store structured and unstructured data. It is considered complementary to a data warehouse.

Data Warehousing

A data warehouse is a digital storage system or repository that collects large amounts of data from various sources. It stores both historical and current data in one place.

Enterprise Data Integration

It's all about gathering data from multiple sources and sharing it across internal applications, software, and systems to generate reports in real-time.

Key Takeaways: Drive Data-driven Innovations with BluEnt's Data Analytics Solutions

And now you have a starter pack for data analytics.

However, not every company has the resources or time to manage its data alone. If that sounds like you, BluEnt's team of certified data analysts and engineers can handle your data needs.

Our professionals will work with you to improve your products and services, streamline your internal processes, boost your marketing, and identify your customers' behavior patterns.

At BluEnt, we serve Fortune, energy, homebuilders, tech companies, SMEs, healthcare institutions, research and analyst firms, Fintech brands, retail, security, and human resource ventures.

Our business services include business process management, business intelligence, CRM solutions, enterprise mobility, enterprise resource management, enterprise content management, and enterprise data management.

Ready to increase your ROI with data analytics and insights tailored to your business needs? Contact us now.

Frequently Asked Questions

What are the most popular big data tools to use?

Data tools help people analyze information. They make the process of data analytics more cost- and time-effective.

1. Hadoop

This name pops up in almost any article about data technologies and tools.

Apache Hadoop is an open-source framework that allows you to process data across clusters of computers. It was developed in response to the difficulty of storing, processing, and retrieving big data.

Advantages: Includes cost efficiency, scalability, flexibility, faster processing and storage, processing power, and fault tolerance.

Challenges: This may include a steep learning curve, a need for comprehensive tools for management, governance, and metadata, and some data security issues.

Companies that use or have used Hadoop for data analytics include Marks and Spencer, British Airways, Royal Mail, and Expedia.
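Hadoop's best-known processing model is MapReduce, which splits a job into a map step and a reduce step that can run on different machines. The sketch below imitates those phases in a single Python process to show the idea. It does not use any Hadoop API, and the function names are our own.

```python
from collections import defaultdict

# Each string stands in for a chunk of a file stored on a different node.
chunks = ["big data is big", "data is everywhere"]


def map_phase(chunk):
    """Emit a (word, 1) pair for every word in one chunk."""
    return [(word, 1) for word in chunk.split()]


def shuffle(pairs):
    """Group emitted values by key, as the framework does between map and reduce."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the values for each key."""
    return {key: sum(values) for key, values in grouped.items()}


mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
word_counts = reduce_phase(shuffle(mapped))
print(word_counts)
```

On a real cluster, each chunk would be mapped on the node that stores it, and the framework would handle the grouping and any failed tasks.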

2. Storm

This is another free, open-source system. It is primarily a solution for processing real-time streams.

Advantages: Easy to implement, scales dynamically, integrates with any programming language and with Hadoop, and can process 1 million 100-byte messages per node per second.

Challenges: Solves only one type of problem (stream processing), has few resources available in the market, and can be complex for developers building apps.

Companies that use or have used Apache Storm include Spotify, Twitter, Yelp, and Okta.
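To show what processing a real-time stream means, here is a small sketch that updates a result as each event arrives instead of waiting for a complete batch. It is plain Python rather than Storm's API, and the event names are invented for the example.

```python
from collections import Counter


def running_counts(stream):
    """Process events one at a time and yield the updated count after each one."""
    counts = Counter()
    for event in stream:
        counts[event] += 1
        yield event, counts[event]


# A generator stands in for an unbounded source such as a message queue.
def clicks():
    yield from ["play", "pause", "play", "skip", "play"]


for event, count in running_counts(clicks()):
    print(event, count)
```

The key point is that a result is available after every event, which is what separates stream processing from batch processing.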

3. High-Performance Computing Cluster (HPCC)

HPCC was developed by LexisNexis Risk Solutions and is also called DAS (Data Analytics Supercomputer). It is a free tool.

Advantages: Parallel data processing, scalability, agility, comprehensiveness, and cost-effectiveness.

Challenges: Delivers only on a single programming language, architecture, and platform.

Companies that use or have used HPCC include Infosys, Fraud Defense Network, and Citrix.

What are big data management challenges, and how can they be fixed?

Many businesses need help managing and analyzing their data. Here are a few common pain points and ways to fix them:

Lack of New Insights: This can occur due to inadequate data, or a system developed for batch processing when you want real-time insights.

For the first cause, run a data audit. Integrating new data sources can help. Furthermore, examine how raw data comes into the system. You can add a data lake if data storage diversity is an issue.

For the second, check whether your extract, transform, and load (ETL) can process your data more frequently. You can also use the Lambda Architecture approach to combine a quick real-time stream with the traditional pipeline.
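The idea behind the Lambda Architecture can be sketched in a few lines: a batch view holds results from the last scheduled run, a speed layer counts what has arrived since, and a query combines the two. The page names, numbers, and function names below are hypothetical.

```python
from collections import Counter

# Batch layer: page-view counts computed by the last scheduled ETL run.
batch_view = Counter({"home": 1000, "pricing": 250})

# Speed layer: counts for events that arrived after that batch run.
realtime_view = Counter()


def record_event(page):
    """Update the speed layer as each new event streams in."""
    realtime_view[page] += 1


def query(page):
    """Serving layer: combine the batch result with the recent real-time delta."""
    return batch_view[page] + realtime_view[page]


for page in ["home", "pricing", "home", "signup"]:
    record_event(page)

print(query("home"), query("signup"))
```

When the next batch run finishes, its output replaces `batch_view` and the speed layer is reset, so the real-time part only ever has to cover a short window.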

Inaccurate Analytics: This is primarily due to poor source data quality and system defects in the data flow. You can address these issues with a data validation process, verification throughout the development life cycle, and quality management.
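A data validation step can be as simple as checking each incoming record against a few rules before it reaches your reports. The sketch below assumes a hypothetical order record; the field names and rules are examples, not a standard.

```python
# Hypothetical rules for an incoming order record (assumed for illustration).
REQUIRED_FIELDS = ("order_id", "amount", "currency")
ALLOWED_CURRENCIES = {"USD", "EUR", "GBP"}


def validate(record):
    """Return a list of problems found in one record; an empty list means it passed."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if field not in record]
    amount = record.get("amount")
    if amount is not None and (not isinstance(amount, (int, float)) or amount < 0):
        problems.append("amount must be a non-negative number")
    currency = record.get("currency")
    if currency is not None and currency not in ALLOWED_CURRENCIES:
        problems.append(f"unknown currency {currency!r}")
    return problems


records = [
    {"order_id": 1, "amount": 19.99, "currency": "USD"},
    {"order_id": 2, "amount": -5, "currency": "USD"},
    {"order_id": 3, "currency": "XYZ"},
]
clean = [r for r in records if not validate(r)]
rejected = [(r["order_id"], validate(r)) for r in records if validate(r)]
print(len(clean), "clean;", rejected)
```

Keeping the rejected records along with the reasons makes it easier to trace bad data back to the source system.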

Expensive Maintenance: You can usually chalk this up to outdated technologies and to using only some of the system's capabilities. The best thing you can do is move on to new big data technologies, optimize your processes, and revise your business metrics.
